From September 2008 to August 2009, Carnegie Mellon graduate student Abe Othman ran a prediction market to forecast when CMU’s two new computer science buildings, Gates and Hillman, would open. Abe designed the market to predict not just the magic day but the likelihood of every possible opening day (in other words, the full probability distribution), which at the time made his the largest prediction market ever built in terms of the number of outcomes.

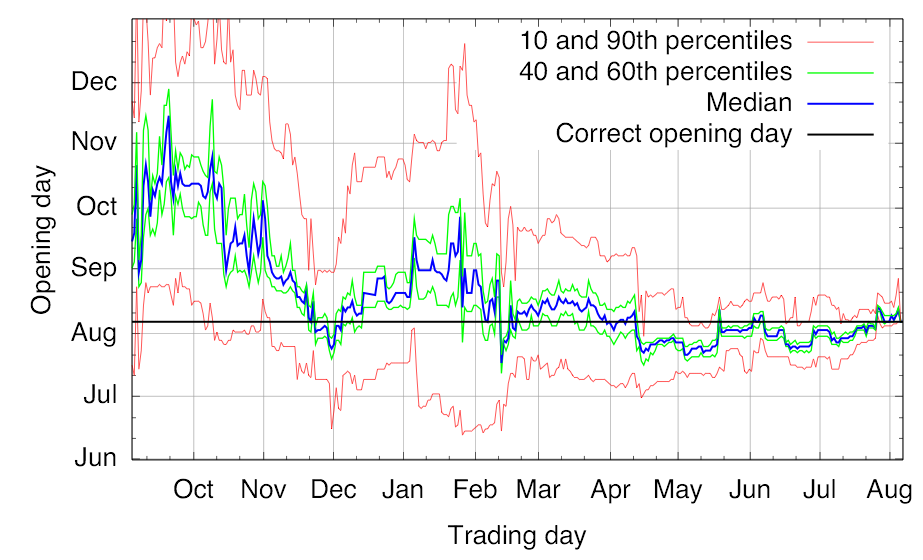

Abe has now created a fascinating video showing the evolution of prices over time in his market. You can see qualitatively that the thing actually worked, zeroing in closer and closer on the actual opening day as the market progressed.

Figure 3 on page 7 of Abe’s paper with Tuomas Sandholm in the 2010 ACM Conference on Electronic Commerce conveys similar information.

Despite plenty of precedent, and despite increasing evidence that non-market methods do surprisingly well too,* I still find it astonishing to see a bunch of people play a subtle betting game for nothing but bragging rights or a small prize and end up with something reasonably intelligent.

By implementing a working market used by over a hundred CMU students, Abe learned a great deal about practical but important details, from the difficulty of crisply defining ground truth (when exactly is a building officially “open”?) to the black art of choosing the liquidity parameter of Hanson’s market maker.** Abe independently created an intuitive interval betting interface similar, and in some ways superior, to our own Yoopick interface and Leslie Fine’s Crowdcast interface. He went so far as to interview his top traders in great detail about their strategies, which ran the gamut from building automated statistical arbitrage agents to calling construction crew members for inside information.

Abe observed that interval betting using Hanson’s market maker leads to very “spiky” prices. Starting from this informal observation, he proved an impossibility result of sorts: any price function with otherwise reasonable properties must be spiky in a formal sense. See Abe and Tuomas’s paper for the details.
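To make interval betting with Hanson’s market maker concrete, here is a minimal Python sketch, assuming the standard logarithmic market scoring rule (LMSR) cost function `C(q) = b * log(sum_i exp(q_i / b))` with liquidity parameter `b`. An interval bet is modeled as buying the same number of shares of every outcome (day) in the interval. The function names, the 10-day horizon, and the trade sizes are hypothetical. This is not Abe and Tuomas’s formal result; it only illustrates the mechanism behind the informal observation: an outcome’s price depends on how many shares cover it, so overlapping intervals make the price curve jump at every interval endpoint.

```python
import math

def lmsr_cost(q, b):
    """Hanson's LMSR cost function C(q) = b * log(sum_i exp(q_i / b))."""
    m = max(q)  # shift by the max for numerical stability
    return m + b * math.log(sum(math.exp((qi - m) / b) for qi in q))

def lmsr_prices(q, b):
    """Instantaneous prices: a softmax of q / b, so they sum to 1."""
    m = max(q)
    w = [math.exp((qi - m) / b) for qi in q]
    z = sum(w)
    return [wi / z for wi in w]

def buy_interval(q, b, lo, hi, shares):
    """Buy `shares` of a contract paying $1 per share if the outcome index is in [lo, hi].

    An interval bet is an equal purchase of every outcome in the interval.
    Returns the new share vector and the amount the trader pays.
    """
    new_q = [qi + shares if lo <= i <= hi else qi for i, qi in enumerate(q)]
    return new_q, lmsr_cost(new_q, b) - lmsr_cost(q, b)

# Hypothetical example: 10 candidate opening days, liquidity b = 20,
# and two traders betting on overlapping ranges of days.
b = 20.0
q = [0.0] * 10
q, cost_a = buy_interval(q, b, 2, 6, 10.0)  # trader A: "opens on day 2..6"
q, cost_b = buy_interval(q, b, 4, 8, 10.0)  # trader B: "opens on day 4..8"
prices = lmsr_prices(q, b)
print([round(p, 3) for p in prices])
```

Here days 4 to 6 are covered by both bets, days 2, 3, 7, and 8 by one, and the rest by none, so the prices form a staircase peaking on the overlap. Rerunning with a smaller `b` makes the same bets move prices much further, which is one way to see why choosing `b` is delicate.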

__________

* Our paper “Prediction without markets”, by Sharad Goel, Daniel Reeves, Duncan Watts, and me, will be published in the 2010 ACM Conference on Electronic Commerce.

** Abe has now developed a flexible market maker that automatically adjusts liquidity to match trader activity. The paper, by Abe, Tuomas, Daniel Reeves, and me, will also be published in the 2010 ACM Conference on Electronic Commerce.

I’d be interested in seeing Abe’s approach. My approach to increasing liquidity with an AMM was just to integrate the AMM with an order book. As more people enter the market, the number of orders in the book grows too, which gives traders more chances to find a matching order, so the AMM doesn’t end up doing all the selling.
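The comment doesn’t spell out the implementation, so the following is only a hypothetical sketch of the general idea for a binary market, assuming an LMSR market maker and a book holding resting sell orders for YES shares. An incoming buy fills from the book whenever the best resting ask is no worse than the market maker’s current quote, and only the remainder goes to the market maker. The class and method names (`HybridBinaryMarket`, `add_ask`, `buy_yes`) are made up for illustration, and a fuller design would also handle NO shares, sell orders, and interleaving book fills with market-maker trades as its price moves.

```python
import heapq
import math

class HybridBinaryMarket:
    """Hypothetical sketch: binary LMSR market maker plus a book of resting YES asks."""

    def __init__(self, b):
        self.b = b
        self.q = [0.0, 0.0]  # YES and NO shares sold so far by the market maker
        self.asks = []       # min-heap of (price, seq, shares) resting YES sell orders
        self._seq = 0        # tie-breaker so equal prices fill in arrival order

    def _cost(self, q):
        m = max(q)  # LMSR cost b * log(sum exp(q_i / b)), shifted for stability
        return m + self.b * math.log(sum(math.exp((x - m) / self.b) for x in q))

    def yes_price(self):
        # LMSR YES price exp(q_yes/b) / (exp(q_yes/b) + exp(q_no/b))
        return 1.0 / (1.0 + math.exp((self.q[1] - self.q[0]) / self.b))

    def add_ask(self, price, shares):
        heapq.heappush(self.asks, (price, self._seq, shares))
        self._seq += 1

    def buy_yes(self, shares):
        """Fill from the book first, then from the market maker.

        Returns (total_cost, shares_filled_from_book).
        """
        cost, from_book = 0.0, 0.0
        # Book fills don't change q, so the market maker's quote is fixed here.
        while shares > 0 and self.asks and self.asks[0][0] <= self.yes_price():
            price, seq, avail = heapq.heappop(self.asks)
            fill = min(avail, shares)
            cost += fill * price
            from_book += fill
            shares -= fill
            if avail > fill:  # put back the unfilled part of a partially filled ask
                heapq.heappush(self.asks, (price, seq, avail - fill))
        if shares > 0:  # the market maker sells whatever the book couldn't
            new_q = [self.q[0] + shares, self.q[1]]
            cost += self._cost(new_q) - self._cost(self.q)
            self.q = new_q
        return cost, from_book

m = HybridBinaryMarket(b=10.0)
m.add_ask(0.40, 5)
m.add_ask(0.45, 5)
m.add_ask(0.60, 5)  # above the market maker's 0.50 quote, so it stays in the book
total, from_book = m.buy_yes(12)
print(total, from_book, m.q)
```

In this example 10 of the 12 shares come from the book, and the market maker only sells the last 2, which matches the intuition that a deeper book leaves less of the selling to the AMM.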

Of course, this is much harder to do when you want oodles of outcomes. The implementation in the article cited above only works for binary markets. I’m currently working (in the background) on an implementation for multi-outcome markets, but I’m not sure my new solution would be a reasonable approach for markets with oodles of outcomes.

We started with Todd Proebsting’s idea to charge transaction fees and feed those back into the liquidity parameter b. But what Abe came up with was much more elegant: the b parameter automatically increases in proportion to trading volume, and everything fits into one closed-form cost function. Prices sum to more than 1, but that makes sense: it’s an implicit form of transaction fee (“vig” in bookmakers’ terminology). If prices do not end up too extreme, the market maker is provably revenue positive. With this market maker, liquidity is “scale free”. The paper should be available April 1.
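As a sketch of what a volume-sensitive b can look like, the code below assumes b grows linearly with the total number of outstanding shares, `b(q) = alpha * sum(q)`, plugged into the usual LMSR cost function `C(q) = b(q) * log(sum_i exp(q_i / b(q)))`. The functional form and the constant `alpha` are assumptions for illustration, not a restatement of the paper’s definitions. Differentiating gives each price as the ordinary LMSR price (a softmax of `q / b`) plus a correction term, and a short calculation shows the prices then sum to `1 + n * alpha * H`, where `n` is the number of outcomes and `H` is the entropy of the ordinary LMSR prices. So the sum is at least 1, which is the implicit vig.

```python
import math

def ls_lmsr_cost(q, alpha):
    """LMSR cost with a liquidity parameter that grows with volume: b(q) = alpha * sum(q).

    Requires sum(q) > 0, e.g. by seeding every outcome with a few shares.
    """
    b = alpha * sum(q)
    m = max(q)  # shift by the max for numerical stability
    return m + b * math.log(sum(math.exp((qi - m) / b) for qi in q))

def ls_lmsr_prices(q, alpha):
    """Gradient of ls_lmsr_cost, i.e. the instantaneous price of each outcome."""
    s = sum(q)
    b = alpha * s
    m = max(q)
    w = [math.exp((qi - m) / b) for qi in q]
    z = sum(w)
    pi = [wi / z for wi in w]      # ordinary LMSR prices at liquidity b
    log_sum = m / b + math.log(z)  # log(sum_j exp(q_j / b))
    avg = sum(qj * pj for qj, pj in zip(q, pi)) / s
    # dC/dq_i = alpha * log_sum + pi_i - sum_j q_j * pi_j / s
    return [alpha * log_sum + p - avg for p in pi]

q = [10.0, 20.0, 30.0]  # hypothetical outstanding shares
alpha = 0.05            # hypothetical liquidity-sensitivity constant
prices = ls_lmsr_prices(q, alpha)
print("b =", alpha * sum(q))
print("prices:", [round(p, 4) for p in prices])
print("sum of prices:", round(sum(prices), 4))  # exceeds 1: the implicit vig
```

Under this assumed form, multiplying every entry of `q` by the same factor leaves all prices unchanged while scaling `b` by that factor, so a trade of fixed size moves prices less in a busier market. That is one way to read the “scale free” remark.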